I’ve just read the AI Forum report analysing the impact and opportunity of artificial intelligence within New Zealand, which was released last week. At 108 pages it’s a substantial read. You can see the full report here.

The timing of this report is very good. There is a lot of news about AI and a growing awareness of it. At the same time, I believe there is a lack of understanding of what AI is capable of and how organisations can take advantage of the recent advances.

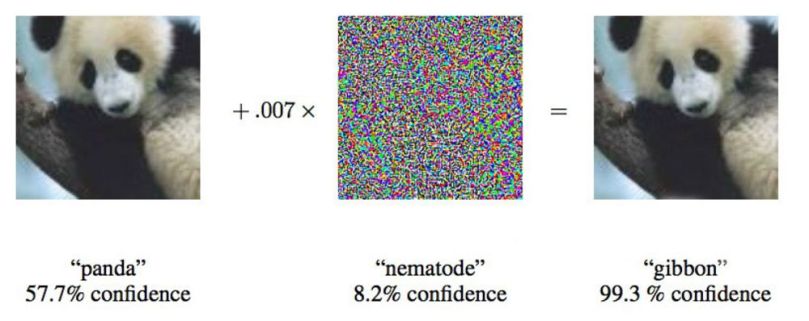

I think the first level of misunderstanding is that people overestimate what the technology can do. This is driven by science fiction and a misinformed media, and fuelled by marketers who want their company and products to be seen to be using AI. AI is nowhere near human-level intelligence and doesn’t understand concepts the way a human does (see my post on the limits of deep learning). That may change, but major breakthroughs are needed and it’s not clear when or if those will occur (see predictions from AI pioneer Rodney Brooks for more on this).

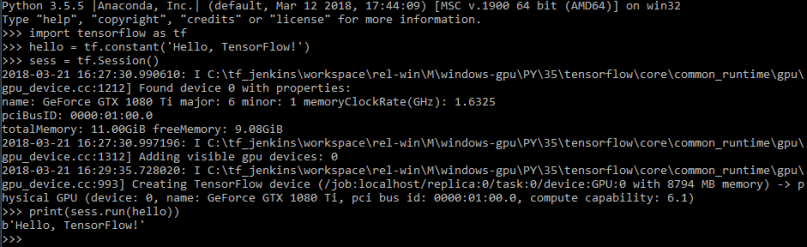

Although AI does not have human-level intelligence, there are a host of applications for the technology. I think the second level of misunderstanding is around how difficult and expensive it is to take advantage of it. The assumption is that it’s expensive and you need a team of “rocket scientists”. From what I’ve seen studying deep learning and talking to NZ companies that are using AI, the technology is very accessible and the investment required is relatively small.

The report is level-headed: it’s not predicting massive job losses. I’m not going to comment further on its predictions of the economic impact. They’ll be wrong because, to quote Niels Bohr, predicting is very difficult, especially about the future.

In my opinion the report did not place enough emphasis on the importance of deep learning. The rise of this technology has driven the resurgence of AI in recent years. Their history of AI missed the single most important event: the AlexNet neural network winning the ImageNet competition in 2012. This brought deep learning to the attention of the world’s AI researchers and triggered a tsunami of research and development. I would go so far as to suggest that the majority of the focus on AI should be on deep learning.

The key recommendation of the report is that NZ needs to adopt an AI strategy. I agree. Of the 6 themes they suggested, I think the key ones are:

- Increasing the understanding of AI capability. This should involve educating the decision makers at the board and executive level about the opportunities to leverage AI technology and the investment required. The outcome of this should be more organisations deciding to invest in AI.

- Growing the capability. NZ needs more AI practitioners. While we can attract immigrants with these skills, we also need to educate more people. I was encouraged to see the report advocating the use of online learning. I agree that NZQA should find a way to recognise these courses, but I think we should go further. Organisations should be incentivised to train existing staff using these courses (particularly if they have a project identified), and young people should be subsidised to study AI, either online or as undergraduates or postgraduates at university.

I am less worried about the risks. In a sense, having AI that was biased, opaque, unethical or breaking copyright law would be a good problem to have: at least then we would be using the technology, and we could address those concerns as they came up. I am also not worried about the existential threat of AI. First, I think human-level intelligence may be a long time away. Second, I’m somewhat fatalistic: I can’t see how you could stop those breakthroughs from happening. We need to make sure that humans come along for the ride.

From my perspective the authors have done a very good job with this report. I encourage you to take the time to read it, and I encourage the government to adopt its recommendations.

An interview with Jeff Dean, Google Senior Fellow and head of the company’s deep learning research team Google Brain, on core machine learning innovations from Google and future directions. This guy is a legend.


The first article was by Douglas Hofstadter, a professor of cognitive science and author of Gödel, Escher, Bach. I read that book many years ago and remember getting a little lost. However, his recent article titled


There is a lot of hype around artificial intelligence, what the technology will bring and its impact on humanity. I thought I’d start my blogging by highlighting some more grounded predictions from someone who has a lot of experience with the practicalities of AI implementation: Rodney Brooks. Rodney is a robotics pioneer who co-founded