I recently had the chance to catch up with Professor Bruce MacDonald, who chairs the Auckland University Robotics Research Group. Although we had never met before, Bruce and I have a connection: we had the same PhD supervisor, John Andreae.

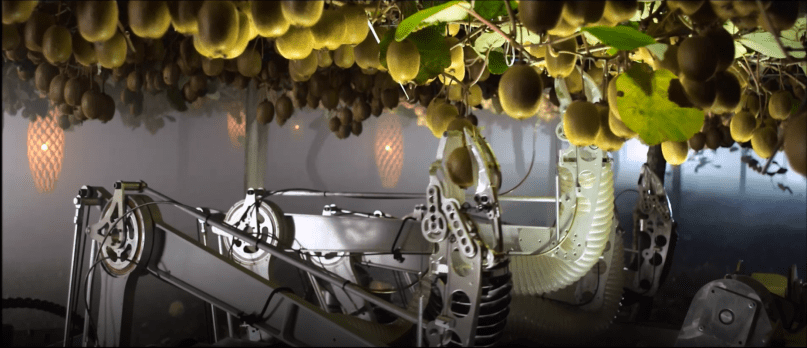

Bruce took me through some of the robotics projects that he and his team have been working on. The most high-profile project is a kiwifruit-picking robot that has been a joint venture with Robotics Plus, Plant and Food Research, and Waikato University. This multi-armed robot sits atop an autonomous vehicle that can navigate beneath the vines. Machine vision systems identify the fruit and obstacles and calculate where they are relative to the robot arm, which is then guided to the fruit. A hand gently grasps the fruit and removes it from the vine using a downward twisting movement. The fruit then rolls down a tube.

The work has been split between the groups, with the Auckland University team focused on the machine vision and vehicle navigation, Waikato on the control electronics and software, and Robotics Plus on the hardware. The team estimates that the fruit-picking robot will be ready for production use in a couple of years. The current plan is to use it to provide a fruit-picking service for growers. This way the growers don’t need to worry about robot repairs and maintenance, and the venture can build a recurring revenue base. They are already talking to growers in New Zealand and the USA.

Along with Plant and Food Research, the group is also researching whether the same platform can be used to pollinate the kiwifruit flowers. Bee populations are declining and expensive to maintain, and this may provide a cost-effective alternative.

The group has just received funding of $17m to improve worker performance in orchards and vineyards. The idea is to use machine vision to understand what expert pruners do and translate that into a training tool for people learning to prune and for an automated robot.

Bruce’s earlier work included the use of robotics in healthcare. This included investigating if robots could help people take their medication correctly and the possibility of robots providing companionship to those with dementia who are unable to keep a pet.

I asked Bruce whether Auckland University teaches deep learning at an undergraduate level. He said that it doesn’t, but it is widely used by postgraduate students. They just pick it up.

Bruce is excited by the potential of reinforcement learning. We discussed whether our supervisor’s goal-seeking PURR-PUSS system could be used with modern reinforcement learning. I think there is a lot of opportunity to leverage this type of early AI work.

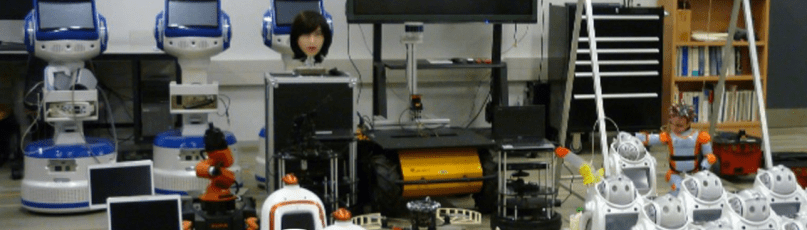

At the end of the meeting Bruce showed me around the robotics lab at the new engineering school. It was an engineer’s dream – with various robot arms, heads, bodies, hands and rigs all over the place. I think Bruce enjoys what he does.

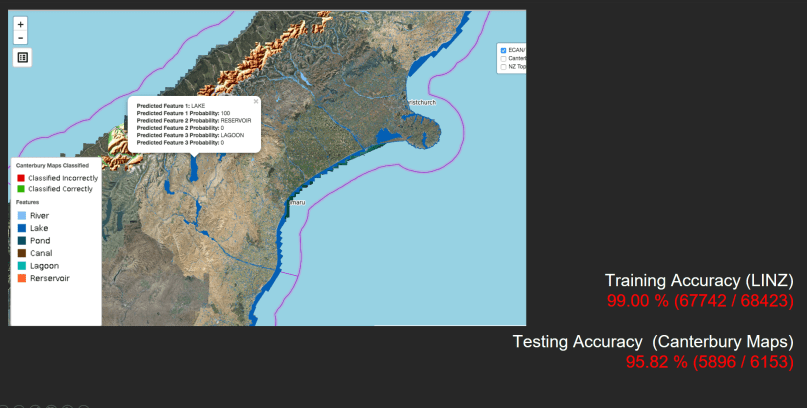

The water classification is very similar, working with 96-million-pixel (12000×8000) images, but at a smaller pixel size of 30×30 cm. The output is the set of polygons representing the water in the aerial images, but the model also classifies the type of water body, e.g. a lake, lagoon, river, canal, etc.
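
To put those image dimensions in perspective, here is a rough back-of-the-envelope sketch of the ground area one such image would cover. It assumes the pixels are square and that "30x30cm" is the ground footprint of each pixel; the function name `image_footprint` is my own and purely illustrative.

```python
# Image dimensions and pixel size as quoted in the text.
WIDTH_PX = 12_000
HEIGHT_PX = 8_000
PIXEL_SIZE_CM = 30  # assumed: ground footprint of one pixel side, in centimetres


def image_footprint(width_px, height_px, pixel_size_cm):
    """Return (total pixels, width in m, height in m, area in km^2)."""
    total_pixels = width_px * height_px
    width_m = width_px * pixel_size_cm / 100    # centimetres to metres
    height_m = height_px * pixel_size_cm / 100
    area_km2 = (width_m * height_m) / 1_000_000  # square metres to square kilometres
    return total_pixels, width_m, height_m, area_km2


if __name__ == "__main__":
    pixels, width_m, height_m, area_km2 = image_footprint(
        WIDTH_PX, HEIGHT_PX, PIXEL_SIZE_CM
    )
    print(f"{pixels:,} pixels covering {width_m:.0f} m x {height_m:.0f} m "
          f"({area_km2:.2f} km^2)")
```

Under those assumptions a single image spans roughly 3.6 km by 2.4 km on the ground.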
